jobert ([H]ard|Gawd, joined Dec 13, 2020, 1,575 messages)

> "lower res"

DLSS is NOT simply running a lower res...


> The only reason here to go with the 6800XT is simply to have something different. Performance is more or less a wash, and Nvidia wins on extra features such as better RT performance, DLSS support, Nvidia Broadcast, and industry-leading video encoder quality.

Have one already. Currently running a 3090, a 3070, a 6800XT, and a 2080 Ti. This is the last system.

> Simply a matter of bandwidth, and Nv has the wider bus this round. AMD is working on that, I'll bet.

I guess you haven't been following any rumors then, because they're just going to increase the Infinity Cache and stick with the same bus width. And the bus width isn't even really important, as it's the overall performance you get from the GPU that matters. Most people, even with higher-end GPUs, are at 1440p, not 4K, so if anything AMD is the better performer for many due to the poor scaling and overhead that Nvidia has with Ampere. It's just silly that I see so many people running a 3090 at 1440p, especially with an older CPU. Hell, I've even seen a few idiots running 3090s and 3080 Tis at 1080p.

> I guess you haven't been following any rumors then, because they're just going to increase the Infinity Cache and stick with the same bus width. And the bus width isn't even really important, as it's the overall performance you get from the GPU that matters. Most people, even with higher-end GPUs, are at 1440p, not 4K, so if anything AMD is the better performer for many due to the poor scaling and overhead that Nvidia has with Ampere. It's just silly that I see so many people running a 3090 at 1440p, especially with an older CPU. Hell, I've even seen a few idiots running 3090s and 3080 Tis at 1080p.

I run a 3090 on a custom loop with a 1440p screen, because I want to turn on every single option, ray tracing as high as I can if applicable, and still have 100+ FPS. 1440p is still the sweet spot for that: everything on, perfectly stable performance. At 4K you sometimes have to turn a few things down or use DLSS. Also, there isn't a good 32-ish-inch 4K screen out with the specs I want for a reasonable price: the god monitor from Asus is really the first, and that sucker is 5k. Big TVs don't count; I do other things on my system, and 200% scaling to make text readable sucks.

> I run a 3090 on a custom loop with a 1440p screen, because I want to turn on every single option, ray tracing as high as I can if applicable, and still have 100+ FPS. 1440p is still the sweet spot for that: everything on, perfectly stable performance. At 4K you sometimes have to turn a few things down or use DLSS. Also, there isn't a good 32-ish-inch 4K screen out with the specs I want for a reasonable price: the god monitor from Asus is really the first, and that sucker is 5k. Big TVs don't count; I do other things on my system, and 200% scaling to make text readable sucks.

Wow, your eyes are worse than mine.

> Wow, your eyes are worse than mine.

4K screens at 100% scaling are ~tiny~. I have 20/20 vision. Unless you're sitting close, it's not usable for coding/text/etc., and if you're sitting close, the screen sucks for anything else like gaming. Screen real estate isn't useful if you have to make everything so big to see it that it eats up the same amount of physical space as, or more than, on a smaller screen. Or are you moving 3-4 more ft away to play a game?
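To put rough numbers on the scaling argument, here is a minimal sketch of the arithmetic. The 32-inch 4K size comes from the posts above; the 27-inch 1440p panel is only an assumed comparison point, and the function names are made up for illustration.

```python
import math


def ppi(width_px, height_px, diagonal_in):
    """Pixels per inch from the resolution and the diagonal size in inches."""
    return math.hypot(width_px, height_px) / diagonal_in


def effective_workspace(width_px, height_px, scale_percent):
    """Logical desktop size left over after OS scaling is applied."""
    factor = scale_percent / 100
    return round(width_px / factor), round(height_px / factor)


if __name__ == "__main__":
    # 32" 4K is taken from the thread; 27" 1440p is an assumption for comparison.
    print("32in 4K PPI:", round(ppi(3840, 2160, 32), 1))
    print("27in 1440p PPI:", round(ppi(2560, 1440, 27), 1))
    for scale in (100, 150, 200):
        print(f"4K at {scale}% ->", effective_workspace(3840, 2160, scale))
```

At 200% scaling a 4K panel is left with the same logical workspace as a 1080p screen, and at 150% the same as a 1440p screen, which is the trade-off being argued about here.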

> DLSS is NOT simply running a lower res...

That claim wasn't made.

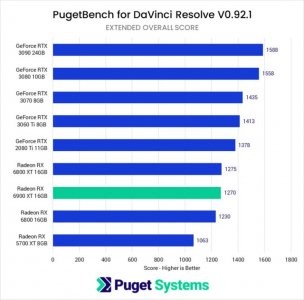

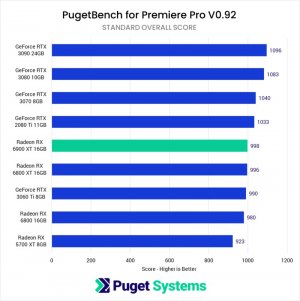

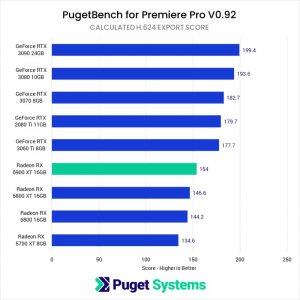

> What about 6900XT vs. 3090? For 90% productivity work? DaVinci Resolve, Premiere Pro, Lightroom, various NLEs with lots of plugins, etc.

CUDA tends to be better supported than OpenCL. But AMD has better Linux drivers if you're doing that.

> RT is the new G-Sync. Nvidia needs something to cram down our throats marketing-wise. Ultimately, will you notice it on vs. off? Probably not. Are you going to keep it on in a high-performance multiplayer game? Probably not. But hey, you get to brag about how awesome Control and Cyberpunk look, woooooooooooow :/ Seriously surprised people are clinging onto RT as much as they are, gotta love fanboys. Would love to see tech reviewers do a wide-scale Nvidia vs. AMD "blind" comparison test.

Don't people in this niche hobby buy these expensive graphics cards for those wooooooooooww moments though, when technology advances? 4K, VR, RTX, etc.

> would love to see tech reviewers do a wide-scale Nvidia vs. AMD "blind" comparison test

This, and in 2 parts: take, say, 100 people and the 5 games with the best RT-DLSS implementations.

> RT is the new G-Sync. Nvidia needs something to cram down our throats marketing-wise. Ultimately, will you notice it on vs. off? Probably not. Are you going to keep it on in a high-performance multiplayer game? Probably not. But hey, you get to brag about how awesome Control and Cyberpunk look, woooooooooooow :/ Seriously surprised people are clinging onto RT as much as they are, gotta love fanboys. Would love to see tech reviewers do a wide-scale Nvidia vs. AMD "blind" comparison test.

I notice it on, and yes, Control looked amazing with it on. I don't play multiplayer games anymore; it's just another visual feature to enjoy that makes things look more lifelike or better. And before you call me a fanboy, I have both Nvidia's and AMD's latest cards.

> This, and in 2 parts: take, say, 100 people and the 5 games with the best RT-DLSS implementations.

Too many reviewers are wasting time with canned benchmarks and not even looking at the game or even playing it. It can be laughable when you see some reviewers playing a game and how poorly they play, meaning they have not even played it, while touting a given line. My views:

4 tests, trying to lock 60 fps:

1) RT on

2) RT off

3) RT off, pushing the graphics settings so that you get the same FPS as with RT on

4) One test that is exactly the same as one of the other 3, just to gauge, when people say something looks better than something else, how much is real versus how much is them trying to perform in a blind test.

Same for RT+DLSS, DLSS alone.
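The four-test idea above could be scheduled along these lines. This is only a rough sketch, not how any reviewer actually runs such a test: the condition names, the A-D labels, and the `game_N` placeholders are all made up for illustration, and it assumes test 4 means repeating one of the other three setups without telling the participant.

```python
import random

# The three distinct setups from the post; the names are hypothetical.
BASE_CONDITIONS = ["rt_on", "rt_off", "rt_off_matched_fps"]


def build_session(rng):
    """Return four blinded runs: the three setups plus one hidden repeat."""
    repeat = rng.choice(BASE_CONDITIONS)
    runs = BASE_CONDITIONS + [repeat]
    rng.shuffle(runs)  # random order, so position gives nothing away
    return list(zip("ABCD", runs))


def build_schedule(n_participants, games, seed=0):
    """Map (participant, game) to that participant's blinded session."""
    rng = random.Random(seed)
    return {
        (person, game): build_session(rng)
        for person in range(n_participants)
        for game in games
    }


def repeated_labels(session):
    """Find the two labels that secretly share the same setup."""
    seen = {}
    for label, condition in session:
        if condition in seen:
            return seen[condition], label
        seen[condition] = label
    raise ValueError("session has no repeated condition")


if __name__ == "__main__":
    games = [f"game_{i}" for i in range(1, 6)]  # placeholders for the 5 games
    schedule = build_schedule(100, games, seed=42)
    session = schedule[(0, "game_1")]
    print(session)
    print("identical pair:", repeated_labels(session))
```

Anyone who rates the two identical runs differently is reporting a difference that is not there, which is the baseline the fourth test is meant to measure. The same schedule could be rebuilt with RT+DLSS and DLSS-only setups in place of the RT ones.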

We could do some 1080p vs. 1440p vs. 4K at the same time. One difficulty of the test, cost-wise, would be that you arguably need to live in the game for a while with RT on to feel that something is off when it is turned off. The difference can be subtle, but as with CGI versus real footage in a movie, maybe some part of the brain feels it.

I would be a bit surprised if it has never been done.

Nvidia invested a fortune in AI, and it makes a lot of sense to have unified production, with the pro card being almost the same as the gamer card. They need to find a use for it in games, regardless of how worthwhile it is, which opens the window. Looking back today at the 3D card world when the Voodoo 2 was released, I feel like the giant amount of hype I had for it as a kid was not fully justified (the TNT was a June 15, 1998 release, the Voodoo 2 a February one).

Maybe you need HDR. Mine is a cheap 400-nit one and I think I am able to feel the difference, but I would like to see that experiment on the nicest hardware to find out how much is really there.

What is nice for people who work on a regular, low-cost budget is that we get ridiculous OptiX rendering and other stuff at a low price with that model, but it still seems up in the air how long it will take to be significant on the player side of things.

> What about 6900XT vs. 3090? For 90% productivity work? DaVinci Resolve, Premiere Pro, Lightroom, various NLEs with lots of plugins, etc.

3090. I have both. I actually think the 6900XT is better in some gaming circumstances, but that's not what you're doing.

> What about 6900XT vs. 3090? For 90% productivity work? DaVinci Resolve, Premiere Pro, Lightroom, various NLEs with lots of plugins, etc.

Possibly better with a 2080 Super/3060 Ti than a 6900XT for most of those.

> No brainer.

I have a 3090 but I'd take the 3080 Ti over it for most things.

If the 3080 Ti had been around at the launch of the 30xx series, I would have one instead of a 3090.

Don't get me wrong, I love the 3090, but I'm not normally a Titan purchaser.

> I have a 3090 but I'd take the 3080 Ti over it for most things.

Why? Is that just because of the lower cost of the 3080 Ti?

> Why? Is that just because of the lower cost of the 3080 Ti?

For me, it would have made sense in November when pricing was normal.

> I've now sold my 3080 and am dithering between a 6900 XT for ~£1200 and an RTX 3080 Ti for £1300. Decisions, decisions...

AMD is solid, but if you care about ray tracing or DLSS, then go with Nvidia.

> Why? Is that just because of the lower cost of the 3080 Ti?

RAM temps and the size of the card. I have a full-coverage block, and the 3090 is just slightly too large for the case.