https://www.extremetech.com/computing/nvidia-reportedly-sold-500000-h100-ai-gpus-in-q3-alone

Any details on this? Are any of the LLM groups getting ready to bring up something seriously impressive?



We all are an AI hallucinating reality.

Can't wait for AI to hallucinate reality

Maybe if it were more than in the past, but insider selling must be quite normal when the price is good, and it can happen for a vast array of reasons, from kids going to an expensive college to wanting an island. According to the graph, insider selling was higher in early 2023, just before the price exploded.

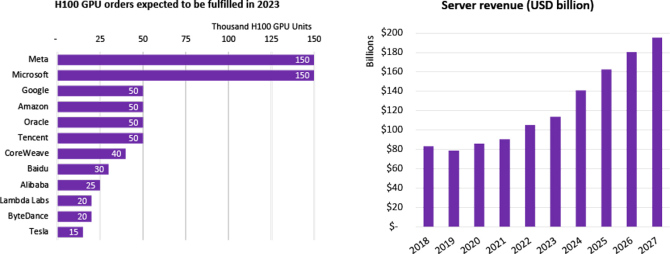

Isn't it already baked into the stock price that the current situation is exceptional? The last two quarters' revenues were ridiculously, earth-shatteringly good and the price did not move. The current price is no higher than in mid-July, and they have had those Q2 and Q3 results since.

The big orders are done

It's more based around the idea that Nvidia has shipped so many units that its customers are locked in. The big players aren't going to spend hundreds of millions on hardware and build a platform around it, only to ditch that platform in the next generation and switch to a different hardware and software solution.


I think Nvidia is going back to being a graphics company again soon. I know MooCow already noted this, but yeah, it's a bad sign.

https://twitter.com/TrackInsiders_/status/1729863849354883325


Fuck, I sold my shares at 300

I've been saying since PS5's release:

Buy PS5 for gaming & invest the extra cash in nvidia shares

(Was true since much before that, I guess)

I wouldn't call it serious competition. Competition, but not too serious. NV holds a huge lead and doesn't appear to be complacent as far as I can tell. Could they falter? Sure. But at this moment they are the market leader for the foreseeable future.

Some things change fast. Some things don't. Nvidia, like it or not, did the real work of wiggling their software into place.

Bear in mind that a lot of companies were caught flat-footed and likely haven't dedicated the resources to this yet, either. Nvidia has a huge lead because they started working on this before people even thought about it. Now, EVERYONE and their mother is thinking about AI. Apple is making really competitive chips these days, which wasn't a thing a few years ago. Google makes its own tensor chips (TPUs), which suck compared to Nvidia, but they also have deep pockets and could put a lot into engineering to try to catch up. Nvidia is dominating the API space with CUDA, but Facebook is like "yo, wanna try Llama? It's open source", which might generate competition there.

Nvidia is embarrassingly ahead of the competition right now and there are no signs, at this point, that they'll be giving up dominance any time soon. But a lot changes really quickly in tech, particularly once other companies with deep pockets figure out what's going on and start committing a ton of resources to it.

The other side of it is: just look at how much silicon they managed to ship, and not small chips either, those things are huge. Could AMD have managed to put out that much product without cutting back on other parts?

Unfortunately, I think at this point all the software-side talent out there is spread thin... and those folks are all trained on NV's software and tools. (And the young talent sees the NV solutions as superior; no top-tier talent is going to an AI firm not using NV at this point.) I mean, it might not be that hard for Intel and AMD to whip out hardware that equals Nvidia's. I don't think Nvidia is doing anything that revolutionary, really, outside of dedicating a ton of silicon space to things like tensors. The software side, however, gets a lot more tricky. Someone said it earlier in the thread and I have to agree... even if AMD and Intel beat Nvidia on the hardware side for a few generations, it won't be enough. To dethrone Nvidia at this point would require essentially the rest of the industry to build competing software standards that actually work... and then it would take another 5 years before there was enough well-trained talent out there to make large inroads possible. (And if the young hotshot talent has their pick, they are still likely to pick firms using NV.)

As much as I dislike Jensen... he has that industry by the short hairs. They don't plan to just sit back hardware wise, and they are entrenched software side hard. Intel and AMD both screwed up hard.

Just goes to show how many full dies they were pumping out... the 4090 dies are essentially cast-offs. They dedicated an insane amount of fab space to making those AD102s.

I mean at times the supply of the 4090s was thin but never out of stock to the point where people (outside of China) were going the scalper route, and certainly not to the degree it was during the height of the Crypto miners.

The H100s don't use AD102. The big server parts that all the data centers are blowing each other over use the GH100, which is an 814 mm² monster in a proprietary SXM5 socket format.

This is why I also believe Nvidia is willing to leave the gaming market. Those 4090 dies are technically not in datacenter parts. Things like the L40 are still using fully operational silicon... it might be clocked a bit lower, but all cores are active. At this point I really don't understand what is stopping Nvidia from taking all those 4090 dies and making a step-down AI accelerator. They resisted that this gen, but I really doubt they will when Blackwell launches. I would be shocked if NV sells 90% operational Blackwell chips in a consumer gaming part. Those are going to go into a step-down datacenter offering at this point. Which I think is exactly what Jensen is signaling. He does fully intend to leave the high-end gaming market. From there, I think the first time he can't "win" the gaming benchmark race with mid-tier silicon, Nvidia just leaves. I suspect 4090 Supers... and if Intel and AMD are looking weak, maybe a 5090 with silicon that in years past would be sold as a 70-class card. If Intel and AMD look like they will take the lead... ahh, Nvidia no longer has time for gaming, all hands on the AI deck.

And as it progresses and gets easier to use and more ubiquitous, more things that didn't use it start to, and the market expands.

GPT-3 was trained on fuckin Voltas. The newest LLMs are yet to be built, and the current ones are already delivering massive, undeniable accelerations to workloads, to the point that you are uncompetitive without them in some industries.

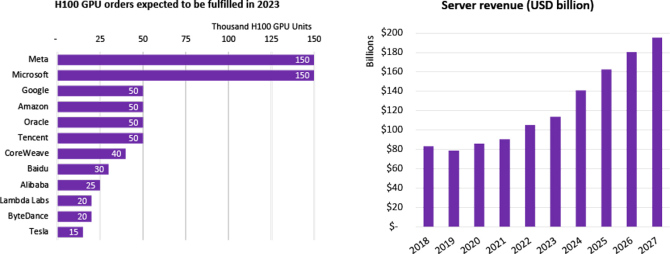

But the thing is, while training is a bitch, if you build something with practical application, inferencing at scale is worse. If Copilot gets better, and it's already at the point where some shops won't let you work without it, Microsoft has to keep scaling up datacenters as inferencing scales. Even if the models get smaller and more efficient, Jevons Paradox kicks us all in the balls. (The fewer resources each use of something needs, the more it gets used, so total resource consumption can go up rather than down.)
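To put rough numbers on the Jevons point, here is a minimal Python sketch. Everything in it is an assumption for illustration: the constant-elasticity demand curve, the `total_compute` helper, and the elasticity values are made up, not measured from any real inference workload.

```python
def total_compute(compute_per_query, elasticity, base_queries=1.0, base_compute=1.0):
    """Total compute demanded, assuming a constant-elasticity demand curve.

    Hypothetical model: query volume scales as (relative cost) ** -elasticity,
    where cost per query is taken to be proportional to compute per query.
    """
    relative_cost = compute_per_query / base_compute
    queries = base_queries * relative_cost ** (-elasticity)
    return queries * compute_per_query


# Baseline: one unit of compute per query, one unit of demand.
before = total_compute(1.0, elasticity=1.5)

# The model gets twice as efficient. With elastic demand (elasticity > 1),
# usage grows faster than the per-query cost shrinks, so total compute rises.
after_elastic = total_compute(0.5, elasticity=1.5)

# With inelastic demand (elasticity < 1), the same efficiency gain saves compute.
after_inelastic = total_compute(0.5, elasticity=0.5)

print(before, after_elastic, after_inelastic)
```

Under these assumptions the datacenter bill only shrinks when demand is inelastic. If making inference cheaper unlocks more than proportionally more usage, the efficiency gain ends up as more total hardware, not less.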