Snowknight26

Supreme [H]ardness

- Joined

- May 8, 2005

- Messages

- 4,435

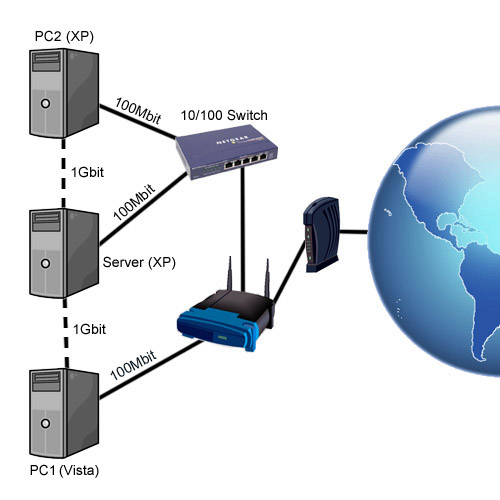

I've been having some problems lately with multiple network connections under Vista (apparently a similar PC running XP shows no troubles) for some time now. To start off, heres a little picture:

Connections that are always present/never removed are represented by solid lines; connections that are either added or removed are represented by dashed lines. The dashed lines are also direct connections. Each one is on a different subnet, so only the two computers on that link are 'visible' to each other.

Assume that all 3 computers have at least 2 ethernet ports.

With all that out of the way, let's cut right to the chase. I wanted to connect PC1 to Server via a gigabit connection because it was a pain copying files between the two computers over the 100Mbit connection. To fix that, I added a gigabit line between PC1 and Server, which is where the problems arose.

When the 1Gbit connection between PC1 (Vista) and Server is active, most, if not all, applications try to use that connection (even though they can't, because it's Local only) when they should be using the 100Mbit connection to the router (which is Local and Internet). However, when the 1Gbit connection between PC2 (XP) and Server is active, only local connections to Server go through it while all other connections use the 100Mbit connection, which leads me to believe that something changed between XP and Vista.

Is there any way to make all local connections to Server from PC1 go through the 1Gbit line and all other connections use the 100Mbit connection, like the PC2 <--> Server connection?

Basically, I want all connections to \\Server to go through the 1Gbit connection while all others, like \\PC2 and WAN traffic, go through the 100Mbit connection.
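
To make the desired behavior concrete, here is a rough Python sketch of the selection rule being asked for: the most specific matching route wins, and the lower metric breaks ties. This is only a model of the goal, not a fix, and not how Vista or XP actually decide. The subnets, interface names, and metrics are all made up for illustration, since the real addresses aren't given above.

```python
import ipaddress

# Hypothetical addressing and metrics; the real subnets are not given in the post.
ROUTES = [
    # (destination network, interface, metric)
    (ipaddress.ip_network("0.0.0.0/0"), "100Mbit-router", 20),       # default route (WAN)
    (ipaddress.ip_network("192.168.1.0/24"), "100Mbit-router", 20),  # LAN behind the router
    (ipaddress.ip_network("10.0.0.0/30"), "1Gbit-direct", 10),       # direct PC1 <-> Server link
]


def pick_interface(destination: str) -> str:
    """Return the interface of the most specific matching route.

    Ties on prefix length are broken by the lowest metric.
    """
    addr = ipaddress.ip_address(destination)
    matches = [route for route in ROUTES if addr in route[0]]
    network, interface, metric = max(
        matches, key=lambda route: (route[0].prefixlen, -route[2])
    )
    return interface


if __name__ == "__main__":
    # Hypothetical addresses: Server on the direct link, PC2 on the LAN, a WAN host.
    for host in ("10.0.0.2", "192.168.1.20", "8.8.8.8"):
        print(host, "->", pick_interface(host))
```

Note that in this model, traffic to Server only takes the gigabit link when Server is addressed by its IP on the direct subnet. If \\Server resolves to its router-side address, the traffic would still go over the 100Mbit connection.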

