Sir Psycho

Limp Gawd

- Joined: May 29, 2020
- Messages: 176

I had to double-check I wasn't on Reddit...


Yea, no... not even close. When AMD can produce a similar card with nearly double the VRAM for less, that's a tight margin without knowing the actual margins.

To be fair, GDDR6 is cheaper than GDDR6X. Also, Nvidia actually had more memory chips on their 3080 than AMD has on any of their 6800/6900 series cards.

And? Are you suggesting the margin on the 3090 is tight?

The 3090 has 24 GDDR6X memory chips. That has to be pretty expensive.
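To put the chip-count comparison in numbers: board VRAM is just chip count times per-chip density. The densities below (1GB GDDR6X chips on the 3080/3090, 2GB GDDR6 chips on the 6800 XT/6900 XT) are my assumption from public spec sheets, not something stated in this thread.

```python
# Board VRAM = number of memory chips x capacity per chip.
# Chip counts and densities are assumed from public spec sheets.
CARDS = {
    "RTX 3080":   {"chips": 10, "gb_per_chip": 1, "type": "GDDR6X"},
    "RTX 3090":   {"chips": 24, "gb_per_chip": 1, "type": "GDDR6X"},
    "RX 6800 XT": {"chips": 8,  "gb_per_chip": 2, "type": "GDDR6"},  # 6900 XT uses the same layout
}


def capacity_gb(chips: int, gb_per_chip: int) -> int:
    """Total VRAM in GB for a board populated with identical memory chips."""
    return chips * gb_per_chip


if __name__ == "__main__":
    for name, spec in CARDS.items():
        total = capacity_gb(spec["chips"], spec["gb_per_chip"])
        print(f"{name}: {spec['chips']} x {spec['gb_per_chip']}GB {spec['type']} = {total}GB")
```

So the 3080 does carry more chips than the 6800 XT (10 vs 8), but each AMD chip holds twice as much, which is how AMD gets to 16GB with fewer packages.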

Decision to be made: 3080 vs 6800 XT

I don't care about ray tracing, streaming, or NVENC.

Some games, like Doom Eternal, are already maxing out 8GB. 8GB is clearly not sufficient today (I'm talking about VRAM usage, not just allocation), let alone in 2 years.

Back to the 3080 and the question of whether 10GB will be enough for years to come: I don't plan to swap my GPU every two years. I plan to keep it 3-5 years.

For those who think 10GB is enough today, will it be enough in 3+ years? Do you have VRAM usage stats to support your arguments?

Using the Ray Tracing Ultra preset in Cyberpunk 2077 at 4K, with DLSS set to Balanced, HDR on, and the Cinematic RTX launch parameter, the game has used upwards of 13GB of VRAM on my RTX 3090 FE. Obviously, that's beyond 10GB and is encroaching on the limits of even the 16GB cards such as the 6800 XT and 6900 XT. However, those cards aren't fast enough at ray tracing, nor do they support DLSS 2.0 or an equivalent. Therefore, I'm not sure what the VRAM usage would look like on one of them.

^ I can run CP2077 at 4K HDR, Ultra RT, and Balanced DLSS on my 3080 without issue. I get 45-50 FPS with no issues related to insufficient VRAM. I play it on DLSS Performance to get 55-60 FPS instead, but it is quite playable on Balanced. Another case of allocation != required.

Not sure what the Cinematic RTX launch parameter is, so that may change things.

Same here. I think he is reporting allocation.

Curious how you are measuring VRAM usage? My understanding is you can't measure it unless you get down to the driver level. Apps such as HWiNFO only report allocation.


He said nearly 10GB of VRAM. If it isn't going over your VRAM capacity, then you aren't going to run into issues...

I never said you would have an issue. The allocation reported by GPU-Z on my RTX 3090 was only 9.5GB of VRAM. If you had bothered to fully read what I wrote, you'd also have realized I've enabled the Cinematic RTX mode via a launch parameter, which alters the game's lighting. This is in addition to running the game with HDR, DLSS 2.0 set to Balanced, and at 4K using the same RT Ultra preset. This increases VRAM usage considerably, or at least allocation. After doing that, GPU-Z reports 12.5-13GB of VRAM usage.

Nope. He said 13 GB.

If you had bothered to fully read my post, you’d see that I included the caveat that I don’t know what Cinematic RTX is and that it may change things.

Yes, I did. I also later mentioned running the game without the Cinematic RTX mode. Without it, GPU-Z reports 9.5GB of VRAM usage at 4K with HDR and DLSS 2.0 set to Balanced.

See the edit.

Fair enough! I wonder what it reports at Quality DLSS (playable on a 3080 for a console peasant used to 30 FPS) and at native 4K (unplayable on both).

Ah. That is allocation. That isn't usage.

We spoke to Nvidia’s Brandon Bell on this topic, who told us the following: “None of the GPU tools on the market report memory usage correctly, whether it’s GPU-Z, Afterburner, Precision, etc. They all report the amount of memory requested by the GPU, not the actual memory usage. Cards with larger memory will request more memory, but that doesn’t mean that they actually use it. They simply request it because the memory is available.”


Isn't it pretty standard for Nvidia to gimp the non-Ti models, though? The 1080 Ti was 11GB and the 2080 was 8GB. At least the gap is closer between the 2080 Ti and 3080. Still dumb, though, I agree.

Fair, but with the 3080 being a 102 die (GA102), like the Ti cards usually are, it was an odd decision.

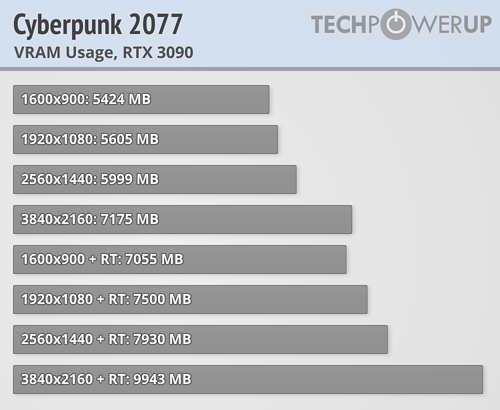


9.9GB at 4K native with RT on according to TPU.

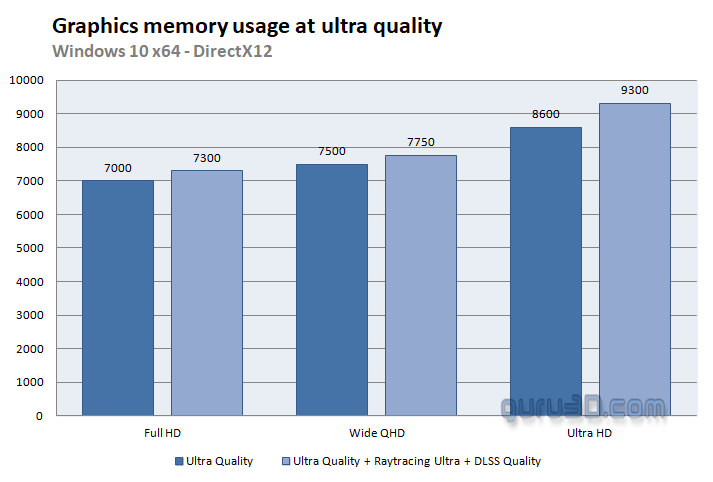


9.3GB at 4K Quality DLSS and RT on according to Guru3D.


It is, but it has fewer CUDA cores and a reduced memory bus. It's gimped compared to the RTX 3090. Supposedly the RTX 3080 Ti's GPU will actually be identical to the GPU found on the RTX 3090, albeit with unknown clocks.

I think this thread is moot and needs to be archived for 6 months, because the most GPU you can buy right now without paying scalpers is a 1650 4GB...

In my neck of the woods, it's more like a GT 710 or HD 7550.

I get what you are saying. But considering they were marketing the 30-series at announcement as an upgrade for Pascal owners, it seems odd to me that 1080 Ti owners would be happy going from an 11GB card to a 10GB card effectively 3 years later. If you actually look at the FE PCB for the 3080, they omitted 2 memory chips, so they could have made it a 12GB card on a 384-bit bus from the start. I honestly think if they had done that, there would have been less stink about VRAM capacity. And the 70-class cards being 8GB for 3 generations seems silly to me.
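The 12GB/384-bit point is simple arithmetic, assuming each GDDR6X chip gets its own 32-bit channel (and that the 3090's 24 chips run in clamshell mode, two chips sharing each channel). A minimal sketch under those assumptions:

```python
BITS_PER_CHIP = 32  # assumed: each GDDR6/GDDR6X package exposes a 32-bit interface


def bus_width_bits(chips: int, clamshell: bool = False) -> int:
    """Memory bus width; in clamshell mode two chips share one 32-bit channel."""
    channels = chips // 2 if clamshell else chips
    return channels * BITS_PER_CHIP


def board_config(chips: int, gb_per_chip: int = 1, clamshell: bool = False) -> tuple[int, int]:
    """Return (bus width in bits, capacity in GB) for a given chip layout."""
    return bus_width_bits(chips, clamshell), chips * gb_per_chip


if __name__ == "__main__":
    print("3080 as shipped (10 chips):", board_config(10))
    print("3080 with all 12 pads populated:", board_config(12))
    print("3090 (24 chips, clamshell):", board_config(24, clamshell=True))
```

Populating the two empty pads gives 12 channels x 32 bits = 384 bits and 12 x 1GB = 12GB, the same bus width the 3090 gets by doubling up chips per channel.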

Why would a game try to allocate more VRAM than it needs?

Lazy coding? I don't know.

Same reason enterprise Java apps, SQL, and Oracle do. If the memory is mapped by the app, you don’t have to worry about something else claiming it, and it’s way easier that way.

Oh yeah, that reminds me of this one place I worked at. They had a SQL process that was just a runaway memory leak over time. We periodically had to reboot it or it would grab all possible RAM.

I wonder how they measured VRAM used if none of the tools on the market (as quoted by the Nvidia rep) report actual usage, only allocation.

I do sizing for those for a living. If you don't right-size a SQL VM and just give it a bunch of RAM, it will use it all. So you increase it, and it "uses" that. If you check in the DB, only 1/10th of it is actually in use; the MSSQL service just claimed all the RAM because it was there.


Same concept for some of these games: they allocate all available VRAM regardless of what they’re going to use. That’s exactly the point we were trying to make. Actual usage is hard to measure.

I am not sure we ever saw this happen (i.e., is there any game that shows VRAM usage going up to 20-22GB on a 3090?).

Probably up to a certain maximum; the 3090 is the first consumer card with that much on it. You have to manage allocated RAM, after all.

Maybe some allocate more and place more in VRAM when more is available (i.e., less intelligence about whether that data will be needed during this scene versus after the next load), but allocate all available VRAM?
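A toy model of the "allocate what's there, up to a cap" behavior being debated. Everything here is hypothetical: the 90% reserve fraction, the 20GB cap, and the 7GB working set are made-up numbers for illustration, not how Cyberpunk or any specific engine actually behaves. It treats the whole card as free, ignoring desktop/OS usage.

```python
from dataclasses import dataclass


@dataclass
class StreamingPool:
    """Hypothetical texture-streaming pool; NOT modeled on any real game."""
    reserve_fraction: float = 0.9  # assumed: request 90% of the free VRAM up front
    max_pool_gb: float = 20.0      # assumed engine-side cap ("up to a certain maximum")

    def reserve(self, free_vram_gb: float) -> float:
        """GB requested from the driver; this is what GPU-Z/Afterburner would show."""
        return min(free_vram_gb * self.reserve_fraction, self.max_pool_gb)


def simulate(card_vram_gb: float, working_set_gb: float, pool: StreamingPool) -> tuple[float, float]:
    """Return (allocated GB, actually used GB) for one card size."""
    allocated = pool.reserve(card_vram_gb)  # simplification: whole card treated as free
    # Only the assets the current scene touches are really used; the rest is cache.
    used = min(working_set_gb, allocated)
    return allocated, used


if __name__ == "__main__":
    for vram in (10, 16, 24):
        allocated, used = simulate(vram, working_set_gb=7.0, pool=StreamingPool())
        print(f"{vram}GB card: allocated {allocated:.1f}GB, used {used:.1f}GB")
```

In this toy model the reported number climbs with card size (and stops at the cap on a 24GB card) while the working set never changes, which is the allocation-vs-usage gap the Nvidia quote describes. Whether a given game behaves like this is exactly what the overlay tools can't tell you.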

8GB got problematic when I ran Monster Hunter's texture pack at 1440p with max settings. So I wouldn't trust 10GB for future games. You're definitely safer with 16GB.

Games definitely can allocate more VRAM than they actually need, we have seen this numerous times and various sources have reported on it.

https://www.resetera.com/threads/vram-in-2020-2024-why-10gb-is-enough.280976/

https://www.gamersnexus.net/news-pc/2657-ask-gn-31-vram-used-frametimes-vs-fps