DeadlyAura

Supreme [H]ardness

Joined: Jun 6, 2005 · Messages: 4,851

Hey all, I picked up a T430 from a buddy whose company no longer needed it after moving to cloud servers. The system was in production up until the day he gave it to me. The only thing he did was remove the drives.

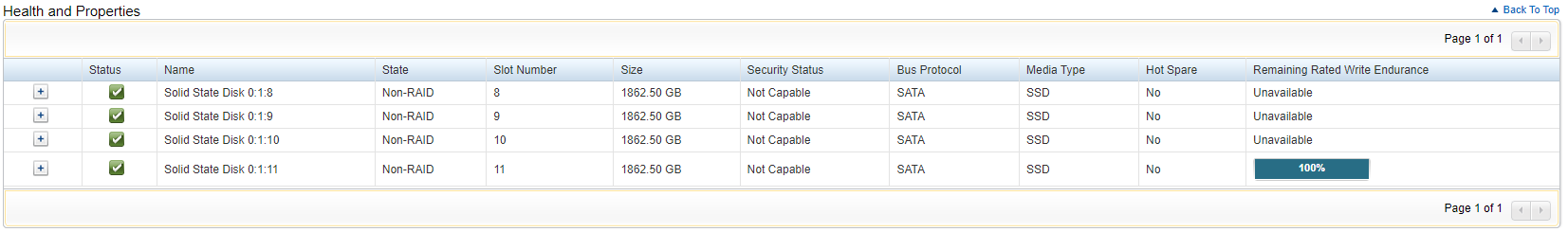

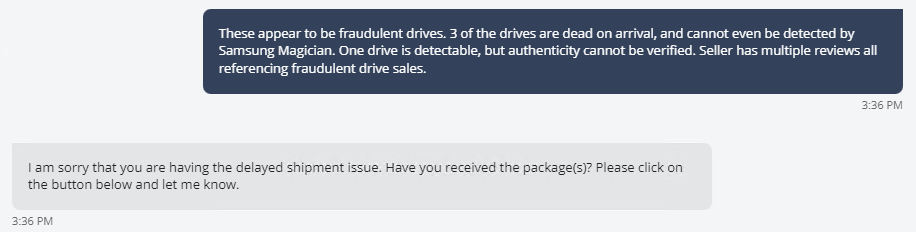

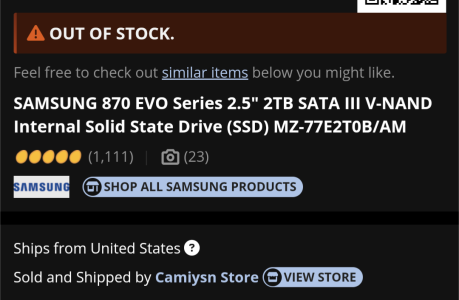

I purchased four brand-new Samsung 870 EVO SSDs from Newegg and installed them in the server. I can configure a RAID 10 array, initialize it, and install the operating system (Server 2019). However, when it reboots, it gets to "initializing firmware interfaces" and then shows that 3 of the 4 SSDs have failed.

I have tried:

- Reseating the drives
- Updating the BIOS
- Updating the RAID controller firmware
- Swapping the drives' positions (the "failed" state follows the drive itself, not the bay)
- Moving the drives to different bays entirely (from bays 0, 1, 2, 3 to bays 8, 9, 10, 11)
- Removing the RAID controller, all cables, the backplane, etc., cleaning all connections, and reinstalling everything
- Moving the RAID controller to another PCI slot

Regardless of all this, the same 3 disks show as "failed" on the first boot of the OS.

When I power down completely and cold boot the system, all 4 drives show "OK", but the array no longer contains bootable data (it is degraded) and has to be rebuilt.

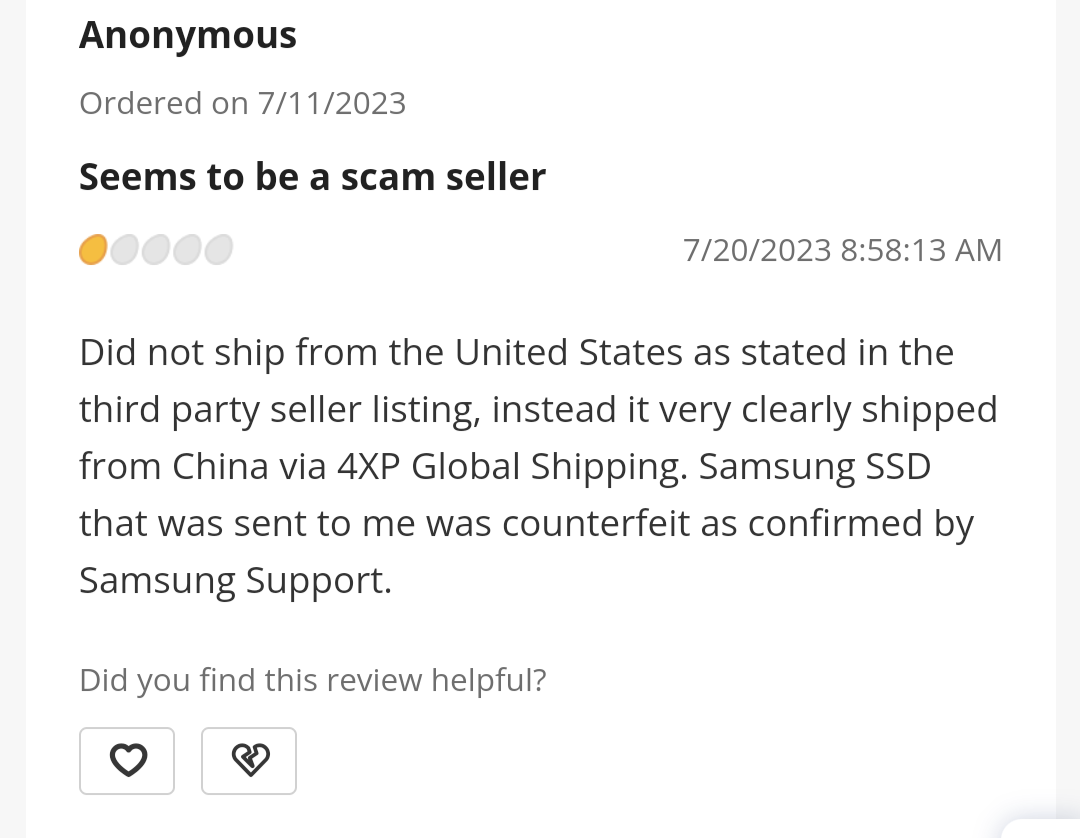

After all of this, it would appear that I have 3 drives that were defective out of the box. However, I am EXTREMELY skeptical, as I have NEVER had a Samsung EVO fail on me, let alone arrive dead. And for 75% of the drives to be defective out of the box? The odds of that feel lower than winning the Powerball.

Am I missing something here?
